Fuzzing

First of all, what exactly is fuzzing? When we fuzz test a program or function that receives input of any kind, we feed it many different inputs until we trigger a crash or another result of interest (frequently a memory error such as a leak). When a program doesn’t sanitize its input properly, malformed or unexpected input from the user can cause crashes and strange or undefined behavior. A good example of fuzzing that you have probably heard of is dirbusting, where we try different URLs, starting with common ones, until we find legitimate directories on a website. Tools like ffuf do an excellent job at it.
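To make the idea concrete, here is a minimal sketch of the most naive form of fuzzing: throwing random strings at a function and recording which ones make it fail. The target `parse_percentage`, the alphabet, and the helper names are all hypothetical and chosen only for illustration; in Python a "crash" shows up as an unhandled exception rather than a segmentation fault.

```python
import random

def parse_percentage(text: str) -> int:
    """Hypothetical target: parses strings such as '42%' without validating them."""
    return int(text.rstrip("%"))

def random_input(rng: random.Random, max_len: int = 8) -> str:
    """Build a short random string from a small, arbitrary alphabet."""
    alphabet = "0123456789%-+ a"
    length = rng.randint(0, max_len)
    return "".join(rng.choice(alphabet) for _ in range(length))

def fuzz(target, trials: int = 1000, seed: int = 0):
    """Call target with random inputs and collect the ones that raise."""
    rng = random.Random(seed)
    crashes = []
    for _ in range(trials):
        data = random_input(rng)
        try:
            target(data)
        except Exception as exc:
            # Record the offending input and the kind of failure it caused.
            crashes.append((data, type(exc).__name__))
    return crashes

crashes = fuzz(parse_percentage)
```

Each entry in `crashes` pairs an input with the exception it triggered, which is the raw material a tester would then triage.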

Fuzzing is especially useful for programs written in low-level or older languages, like Basic, Assembly, C/C++, etc., that don’t prevent the programmer from making mistakes like improper typecasting, use after free (of a pointer), or overflows (buffer, stack, integer). Such mistakes don’t necessarily cause compile-time errors or crashes at runtime, yet a shrewd attacker could exploit them. Usually, when the term ‘fuzzing’ is mentioned, it refers to this type of fuzzing.

Types of Fuzzing

There are three approaches to fuzzing that we should enumerate.

Whitebox Fuzzing

By ‘whitebox fuzzing’ we refer to a type of fuzzing wherein the fuzzer attempts to analyze the internal structure of the program in order to track and maximize code coverage. Code coverage refers to the proportion of branches in the code that we reached; every time a conditional statement like if or while is evaluated, execution splits into one branch where the condition is true and another where it is false. The rationale is that if an error is hiding in some branch, we must first make sure that we reach that branch. To perform this kind of analysis, the program must be instrumented during compilation.

Instrumentation works by calling a special function every time a branch is run, which logs it as having been run, sometimes down to the resolution of individual lines, so that coverage can be tracked. Instrumentation can also help find bugs. For instance, AddressSanitizer (ASan) helps find memory errors, such as out-of-bounds accesses, use after free and leaks, that don’t normally cause crashes. Since full structural analysis requires a lot of resources, whitebox fuzzing is slow, but it is much more effective at finding bugs and vulnerabilities, especially ones hidden deep in the program. Of course, in order to perform extensive instrumentation one must have access to the source code, which isn’t always available, especially to an attacker.
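The logging idea can be imitated in Python without a compiler. The sketch below uses the interpreter's `sys.settrace` hook to record which lines of a function were executed. It is only an analogy for compile-time instrumentation, not how fuzzers for native code work, and the target `classify` and the helper `run_with_coverage` are hypothetical names.

```python
import sys

def classify(n: int) -> str:
    """Hypothetical target with two branches."""
    if n < 0:
        return "negative"
    return "non-negative"

def run_with_coverage(func, *args):
    """Run func(*args) and return the set of line numbers executed inside it."""
    executed = set()
    code = func.__code__

    def tracer(frame, event, arg):
        # Log every 'line' event that belongs to the function under test.
        if frame.f_code is code and event == "line":
            executed.add(frame.f_lineno)
        return tracer

    previous = sys.gettrace()
    sys.settrace(tracer)
    try:
        func(*args)
    finally:
        # Restore whatever trace function was active before.
        sys.settrace(previous)
    return executed

cov_pos = run_with_coverage(classify, 5)
cov_neg = run_with_coverage(classify, -5)
new_lines = cov_neg - cov_pos  # lines reached only by the negative input
```

A non-empty `new_lines` means the second input reached code the first one did not, which is exactly the signal a coverage-guided fuzzer looks for.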

Blackbox Fuzzing

In this approach, on the other hand, we don’t care about the structure of the program, and treat it as a black box. Still, we can use the output of the program to try to figure out what happens inside and thus craft smarter and more efficient input. Since we avoid the overhead associated with whitebox fuzzing, blackbox fuzzing is much faster but less effective at finding mistakes and vulnerabilities.

Graybox Fuzzing

This approach tries to strike a balance between the respective pros and cons of the aforementioned approaches; it uses light instrumentation at compile time instead of a full analysis in order to calculate code coverage and track the program’s state. Most popular fuzzers today, like AFL, Honggfuzz and libFuzzer, are graybox fuzzers. The source code is usually needed to perform such fuzzing; there are workarounds if only an executable is available, but they seriously harm performance. In this article I won’t touch on these techniques.

Techniques of Fuzzing

As in dirbusting, fuzzing need not be done using brute force, that is, by trying random input. Indeed, brute force isn’t a good technique for fuzzing and is rarely used. Fortunately, there are a number of smarter techniques that lead to better results with a reasonable amount of time and resources. Here I shall discuss two of them:

Mutation-based Fuzzing

This technique is based on graybox fuzzing. We start with legitimate, well-formed input and apply several types of mutations to it. Using instrumentation, we take the most successful mutations, those that reveal new branches, and use them as seeds for the next generation. This process is somewhat reminiscent of Darwinian natural selection. It also has a useful side effect: mutations that the program rejects fall out of use, and we are left with only input that the program accepts.

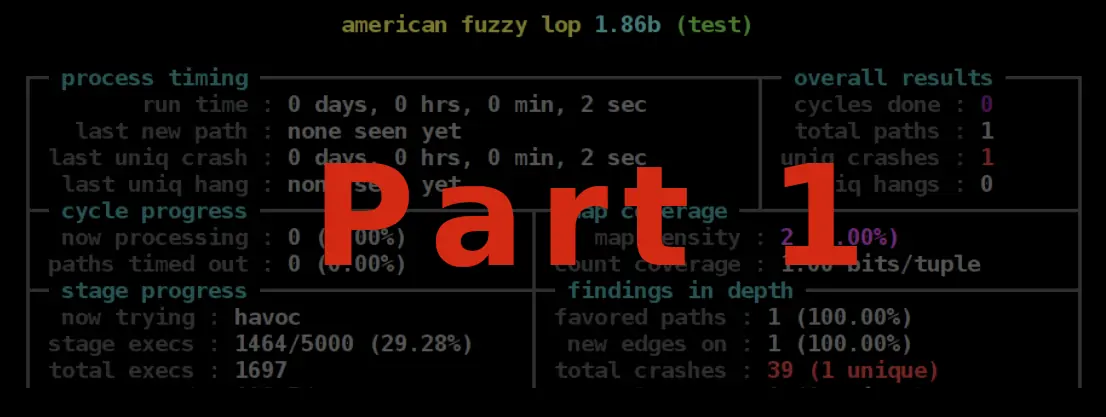

The best-known tool for this technique (and arguably the best-known fuzzer in general) is AFL, or American Fuzzy Lop.

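AFL applies many mutation operators (bit and byte flips, arithmetic, block insertion and deletion, and more). In simplified form, they boil down to three basic types: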

- Deletion of a random bit.

- Insertion of a random bit.

- Flipping of a random bit (from 0 to 1 or vice versa).
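The three mutations listed above can be sketched in a few lines. This is an illustration only and not AFL's actual code: the helper names are hypothetical, and the input is treated as a flat list of bits that is zero-padded back to whole bytes after mutation.

```python
import random

def to_bits(data: bytes) -> list[int]:
    """Unpack bytes into a list of bits, most significant bit first."""
    return [(byte >> shift) & 1 for byte in data for shift in range(7, -1, -1)]

def from_bits(bits: list[int]) -> bytes:
    """Pack bits into bytes, padding the tail with zeros to a whole byte."""
    padded = bits + [0] * (-len(bits) % 8)
    return bytes(
        sum(bit << (7 - i) for i, bit in enumerate(padded[pos:pos + 8]))
        for pos in range(0, len(padded), 8)
    )

def delete_bit(bits: list[int], rng: random.Random) -> list[int]:
    """Remove one bit at a random position (no-op on empty input)."""
    if not bits:
        return bits[:]
    pos = rng.randrange(len(bits))
    return bits[:pos] + bits[pos + 1:]

def insert_bit(bits: list[int], rng: random.Random) -> list[int]:
    """Insert one random bit at a random position."""
    pos = rng.randint(0, len(bits))
    return bits[:pos] + [rng.randint(0, 1)] + bits[pos:]

def flip_bit(bits: list[int], rng: random.Random) -> list[int]:
    """Invert one bit at a random position (no-op on empty input)."""
    if not bits:
        return bits[:]
    pos = rng.randrange(len(bits))
    return bits[:pos] + [bits[pos] ^ 1] + bits[pos + 1:]

def mutate(data: bytes, rng: random.Random) -> bytes:
    """Apply one randomly chosen mutation and return the result as bytes."""
    operator = rng.choice([delete_bit, insert_bit, flip_bit])
    return from_bits(operator(to_bits(data), rng))

rng = random.Random(1)
mutant = mutate(b"hello", rng)
```

Calling `mutate` repeatedly on its own output produces a chain of increasingly distorted variants of the original seed.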

AFL doesn’t work with only one mutated input at any given time, but distributes its resources among several of them, and prefers the ones with the most energy. The energy of each input is based on which branches in the code it runs: rarer branches, which most input never reaches, are assigned more energy. Each branch has a unique hash, and this allows tracking the number of executions. It’s important to mention that the initial input in this technique need not be a single sample; it can come from a set of seed inputs (a corpus) containing more than one variation.
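The energy idea described above can be sketched as a weighted choice. This is a simplified illustration under my own assumptions, not AFL's real scheduling code: the function names, the sample inputs, the branch labels and the hit counts are all hypothetical, and energy is taken to be simply the inverse of how often a path has been seen.

```python
import random
from collections import Counter

def path_id(branches: frozenset) -> int:
    """Identify an execution path by hashing the set of branches it covered."""
    return hash(branches)

def assign_energy(input_paths: dict, path_hits: Counter) -> dict:
    """Give each input energy inversely proportional to how common its path is."""
    return {
        data: 1.0 / path_hits[path_id(branches)]
        for data, branches in input_paths.items()
    }

def choose_input(energies: dict, rng: random.Random) -> bytes:
    """Pick the next input to mutate, favoring those with more energy."""
    candidates = list(energies)
    weights = [energies[data] for data in candidates]
    return rng.choices(candidates, weights=weights, k=1)[0]

# Hypothetical bookkeeping: which branches each input reached...
input_paths = {
    b"GET /": frozenset({"parse", "method_get"}),
    b"\x00\x01": frozenset({"parse", "error_path"}),
}
# ...and how many executions have followed each path so far.
path_hits = Counter({
    path_id(input_paths[b"GET /"]): 90,
    path_id(input_paths[b"\x00\x01"]): 10,
})

energies = assign_energy(input_paths, path_hits)
next_input = choose_input(energies, random.Random(0))
```

With these made-up numbers the input that reached the rarely executed path receives nine times the energy of the common one, so it is selected for mutation far more often.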

Grammatical Fuzzing

You are probably aware that many programs cannot receive unstructured input, but have specific rules on how input must be formed. These rules are called the syntax (or grammar) of the input, since they are similar to the syntactic rules of natural languages. In order to fuzz test such programs in a way that covers most syntactic options, the fuzzer must be aware of the syntax; otherwise, input produced using simple mutations would be filtered and discarded. Such fuzzers already exist, and can fuzz JavaScript, WASM, URLs and others, but as of the writing of this article most of them are experimental and slow, and as far as I know all are written in Python, which is great for prototyping and demos, but not so much for optimized production-grade fuzzers. Therefore, they are rarely used.
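As a rough illustration of syntax-aware input generation, the sketch below expands a toy grammar for arithmetic expressions into random strings that are always well formed. The grammar, the function name `generate` and the depth limit are my own assumptions for the example and are not taken from any particular fuzzer.

```python
import random

# Toy grammar: each nonterminal maps to a list of alternative expansions.
# The last alternative of every rule is the shortest one.
GRAMMAR = {
    "<expr>": [["<term>", " + ", "<expr>"], ["<term>", " - ", "<expr>"], ["<term>"]],
    "<term>": [["<factor>", " * ", "<term>"], ["<factor>"]],
    "<factor>": [["(", "<expr>", ")"], ["<number>"]],
    "<number>": [["<digit>", "<number>"], ["<digit>"]],
    "<digit>": [[d] for d in "0123456789"],
}

def generate(symbol: str, rng: random.Random, depth: int = 0, max_depth: int = 8) -> str:
    """Expand symbol into a random string that follows GRAMMAR."""
    if symbol not in GRAMMAR:
        return symbol  # terminal: emit as-is
    options = GRAMMAR[symbol]
    if depth >= max_depth:
        # Past the depth limit, always take the shortest alternative
        # so that the recursion is guaranteed to end.
        options = [options[-1]]
    expansion = rng.choice(options)
    return "".join(generate(part, rng, depth + 1, max_depth) for part in expansion)

rng = random.Random(0)
samples = [generate("<expr>", rng) for _ in range(5)]
```

Because every string is derived from the grammar, none of the samples would be rejected by a parser for this syntax, which lets the fuzzer spend its time on the logic behind the parser instead.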

Next – Part 2

In the next chapter, “Fuzzing with AFL”, I demonstrate how to fuzz a simple program using AFL to find crashes that might be dangerous in the real world.
